Building Safer Platforms with Intelligent Moderation

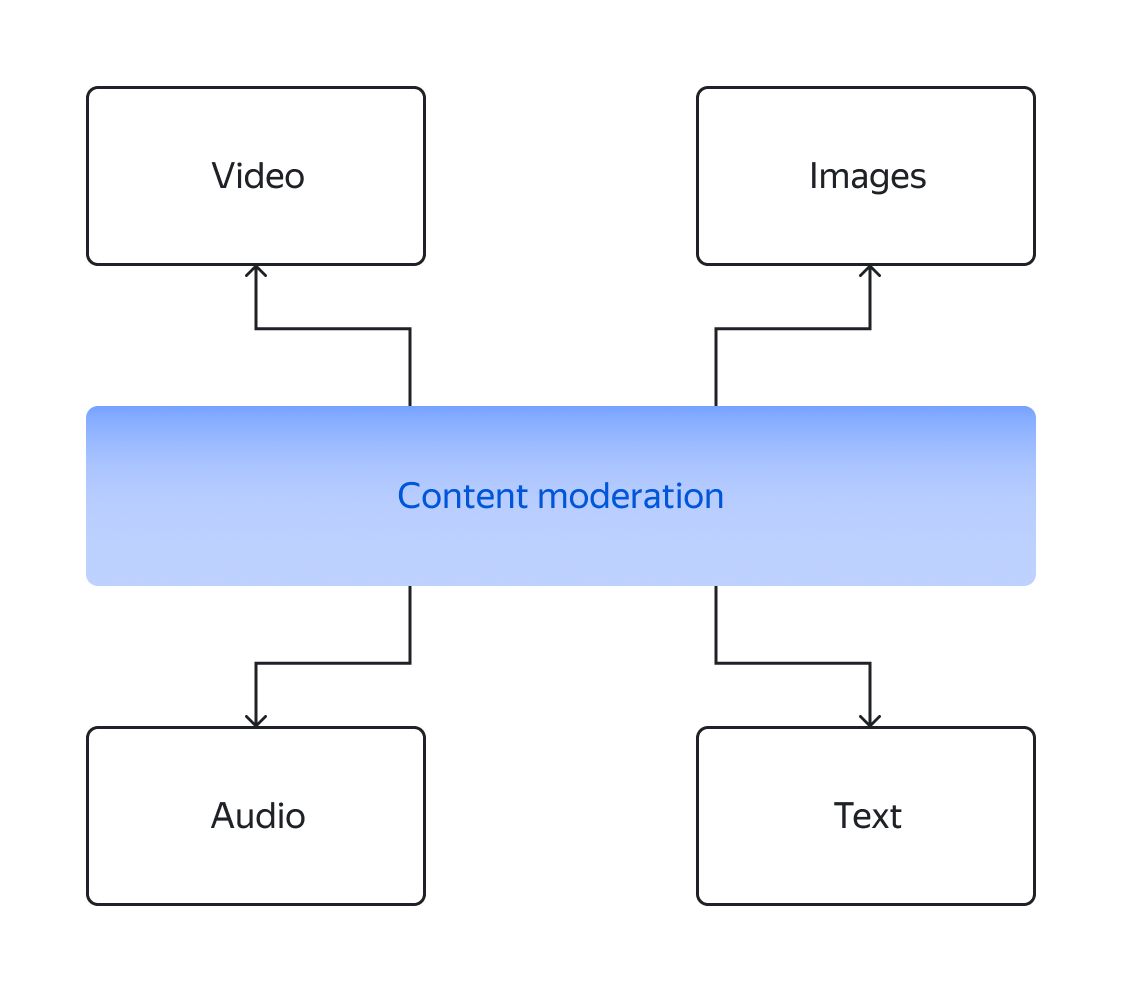

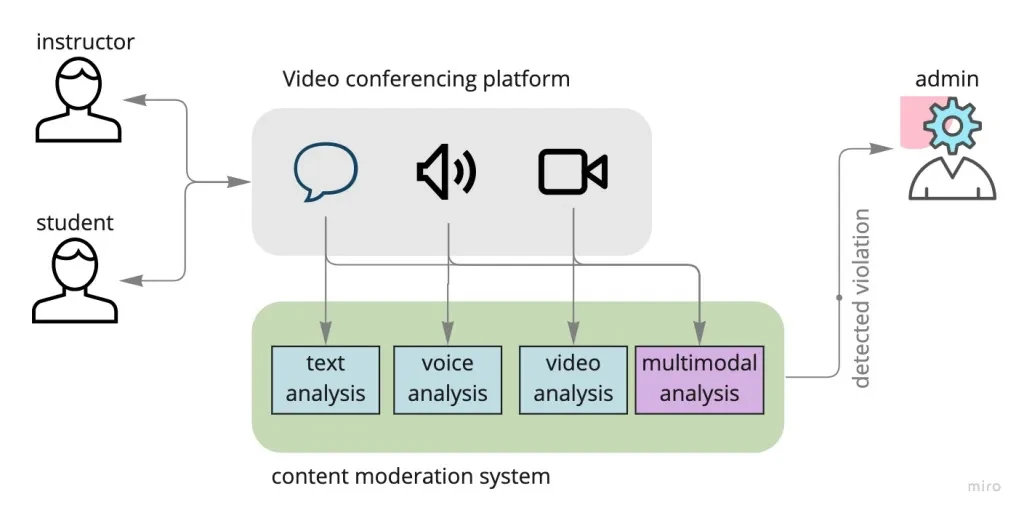

AI-driven content moderation systems analyze text, images, audio, and video to identify violations such as spam, hate speech, abuse, or misinformation. By automating moderation, platforms can handle large volumes of content efficiently while maintaining quality and safety.

Core Moderation Capabilities

- Text Filtering: Detect harmful language, spam, and inappropriate content.

- Image & Video Moderation: Identify unsafe or restricted visual content.

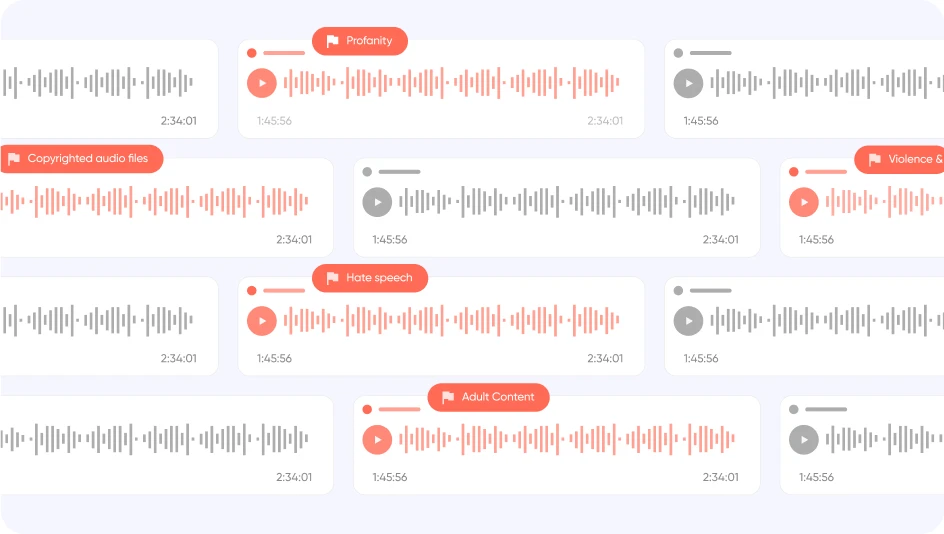

- Audio Monitoring: Analyze voice data for abusive or sensitive content.

- Real-Time Moderation: Monitor and act instantly on live content streams.

- Policy Enforcement: Apply platform guidelines consistently and accurately.
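
As a rough illustration of the text filtering capability listed above, the sketch below flags a message using two simple rules. It is a minimal example and not a description of any particular product: the `BLOCKED_TERMS` list, the `MAX_LINKS` limit, and the `check_text` function are all hypothetical, and a production system would typically rely on trained classifiers rather than fixed rules.

```python
import re

# Hypothetical placeholder values; a real platform would maintain these per policy.
BLOCKED_TERMS = {"badword", "scamlink"}
MAX_LINKS = 2

URL_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)
WORD_PATTERN = re.compile(r"[a-z0-9']+")


def check_text(text: str) -> list[str]:
    """Return the list of rule names that the text violates (empty if none)."""
    reasons = []

    # Rule 1: any word in the message matches the blocklist (case-insensitive).
    words = set(WORD_PATTERN.findall(text.lower()))
    if words & BLOCKED_TERMS:
        reasons.append("blocked_term")

    # Rule 2: an unusually high number of links is treated as a spam signal.
    if len(URL_PATTERN.findall(text)) > MAX_LINKS:
        reasons.append("too_many_links")

    return reasons
```

Returning the reasons, rather than a single yes/no flag, makes it easier to map each detection back to the specific guideline it enforces.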

Key Use Cases

- Social Media Platforms to filter harmful user-generated content.

- E-commerce Websites to manage product reviews and listings.

- Online Communities to ensure safe and respectful discussions.

- Gaming Platforms to prevent toxic behavior and abuse.

- Streaming Services to monitor live chats and interactions.

AI-powered moderation reduces manual effort, shortens response times, and helps enforce rules consistently, even on platforms with millions of users.

Content moderation is not just about filtering; it is about building trust, safety, and a better user experience in the digital world.

Advanced Moderation Intelligence

By combining AI with human review, businesses can achieve higher accuracy and a better understanding of context. These hybrid systems continuously learn and adapt, improving their ability to detect nuanced and evolving content patterns.
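
One common way to combine automated and human review is to act automatically only when the model is confident and to send everything else to a moderator. The sketch below illustrates that idea under stated assumptions: the two thresholds, the `Decision` type, and the `route` function are hypothetical, and the violation score is assumed to come from some upstream classifier that outputs a value between 0 and 1.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values would be tuned per policy and platform.
AUTO_REMOVE_THRESHOLD = 0.95
AUTO_APPROVE_THRESHOLD = 0.20


@dataclass
class Decision:
    action: str   # "remove", "approve", or "human_review"
    score: float  # classifier's estimated probability of a violation


def route(violation_score: float) -> Decision:
    """Decide what to do with an item given a violation score in [0, 1]."""
    if not 0.0 <= violation_score <= 1.0:
        raise ValueError("violation_score must be between 0 and 1")

    # High-confidence violations are removed without waiting for a person.
    if violation_score >= AUTO_REMOVE_THRESHOLD:
        return Decision("remove", violation_score)

    # Clearly benign items are approved automatically.
    if violation_score <= AUTO_APPROVE_THRESHOLD:
        return Decision("approve", violation_score)

    # Everything in between is ambiguous and goes to a human moderator.
    return Decision("human_review", violation_score)
```

In a setup like this, moderator decisions on the ambiguous middle band could be fed back as labeled examples, which is one way such a system might keep adapting to new content patterns.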